Ethics in artificial intelligence is crucial in today’s rapidly advancing technological landscape. It’s a multifaceted field exploring the moral implications of AI development and deployment, from bias and fairness to privacy and transparency. This exploration delves into the complex considerations surrounding accountability, societal impacts, and the future of AI.

This discussion examines the core ethical challenges in various applications of AI, including healthcare, autonomous systems, and environmental impact. Understanding these issues is vital for creating responsible and beneficial AI systems that align with human values.

Defining AI Ethics

Artificial intelligence (AI) is rapidly transforming various aspects of our lives, from healthcare to transportation. However, this rapid advancement necessitates a careful consideration of ethical implications. AI systems, while capable of remarkable feats, can also perpetuate biases, infringe on privacy, and pose existential risks if not developed and deployed responsibly. This section delves into the core principles and considerations surrounding ethical AI.

Ethical considerations in AI encompass a broad spectrum of principles and concerns, aiming to ensure that AI systems are developed and used in a way that aligns with human values and promotes the common good. This includes considerations of fairness, transparency, accountability, and privacy, amongst other crucial aspects. Understanding these principles is vital for building trustworthy and beneficial AI systems.

Key Principles Guiding Ethical AI

The development and deployment of AI systems must adhere to fundamental ethical principles. These principles, while not exhaustive, provide a robust framework for guiding ethical AI practices. They often intersect and are not mutually exclusive.

- Fairness: AI systems should treat all individuals and groups equitably, without perpetuating or amplifying existing societal biases. This necessitates careful consideration of data used to train AI models and ongoing evaluation for fairness. For instance, an AI system used for loan applications must not discriminate against specific demographic groups based on protected characteristics.

- Transparency: The inner workings of AI systems should be understandable and explainable to humans. This principle is crucial for accountability and trust. A medical diagnosis system, for example, should explain its reasoning, allowing clinicians to assess the reliability and validity of the diagnosis.

- Accountability: Clear mechanisms for responsibility and accountability should be established for the development, deployment, and use of AI systems. This involves determining who is responsible when AI systems cause harm. A self-driving car that causes an accident, for example, necessitates a clear process for determining liability.

- Privacy: AI systems should respect and protect individual privacy. Data used to train or operate AI systems should be collected and used ethically and securely, with appropriate safeguards in place. This is particularly critical for AI systems that collect and analyze personal data, such as social media analysis.

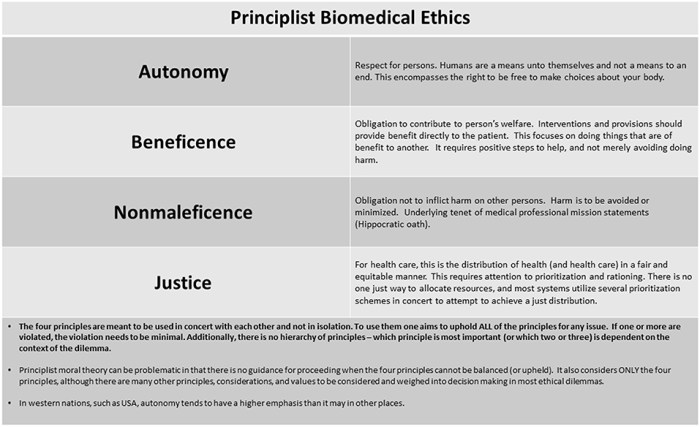

- Beneficence: AI systems should be designed and used to benefit humanity, promoting human well-being and avoiding harm. AI in healthcare, for example, should be used to improve patient outcomes and not to exploit vulnerable populations.

Ethical Guidelines vs. Regulations

Ethical guidelines and regulations for AI serve different but complementary purposes. Guidelines provide broad principles and recommendations, while regulations impose specific requirements and enforcement mechanisms. Guidelines are often developed by organizations and professional bodies, while regulations are often enacted by governments.

Ethical Dilemmas in AI Systems

AI systems can present complex ethical dilemmas. One example is the use of AI in criminal justice, where bias in data can lead to disproportionate outcomes. Another is the potential for autonomous weapons systems to cause unintended harm.

Core Ethical Concerns in AI

The table below outlines core ethical concerns related to AI systems, including the issue, its description, potential impact, and potential mitigation strategies.

| Issue | Description | Impact | Mitigation Strategies |
|---|---|---|---|
| Bias in AI Systems | AI systems trained on biased data can perpetuate and amplify existing societal biases. | Unequal treatment, discrimination, and unfair outcomes for certain groups. | Careful data curation, diverse datasets, fairness metrics, ongoing monitoring and auditing. |
| Lack of Transparency | The inner workings of some AI systems are opaque, making it difficult to understand how decisions are made. | Reduced trust, lack of accountability, difficulty in identifying and correcting errors. | Explainable AI (XAI) techniques, clear documentation, transparent algorithms. |
| Privacy Concerns | AI systems often collect and analyze vast amounts of personal data, raising privacy concerns. | Data breaches, misuse of personal information, loss of control over personal data. | Robust data security measures, clear data privacy policies, user consent mechanisms. |
| Job Displacement | Automation driven by AI may lead to job displacement in certain sectors. | Economic disruption, social unrest, increased inequality. | Reskilling and upskilling programs, support for displaced workers, responsible automation strategies. |

Bias and Fairness in AI

AI systems, trained on vast datasets, can inadvertently inherit and amplify existing societal biases. This inherent bias can lead to unfair or discriminatory outcomes, impacting various aspects of life, from loan applications to criminal justice. Understanding and mitigating these biases are crucial for ensuring the ethical deployment of AI.

Potential Sources of Bias in AI Algorithms

AI algorithms are susceptible to bias stemming from various sources. Data used to train these algorithms can reflect existing societal inequalities, leading to skewed results. Algorithmic design choices, such as the specific features selected for analysis, can also inadvertently introduce bias. Furthermore, the human developers themselves, with their own implicit biases, may unconsciously influence the development process, resulting in biased AI models.

This interconnectedness of data, algorithms, and human intervention highlights the multifaceted nature of bias in AI.

Methods to Mitigate Bias in AI Systems

Several strategies can help mitigate bias in AI systems. These include carefully curating and pre-processing training data to identify and address potential biases. Employing diverse and representative datasets is crucial for creating more accurate and fair models. Algorithmic design choices should prioritize fairness and consider potential biases introduced by the selected features. Continuous monitoring and evaluation of AI systems, along with feedback loops, can identify and rectify any emerging biases.

A crucial aspect is the inclusion of diverse perspectives from stakeholders throughout the development process.
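As a rough illustration of the data-curation step described above, the following sketch audits how well each group is represented in a dataset and derives per-example weights that give every group the same total influence. Reweighting is only one of several possible techniques and is an assumption of this example, not something the discussion above prescribes; the function names and toy data are hypothetical.

```python
from collections import Counter


def group_shares(groups):
    """Return the fraction of examples that belong to each group."""
    counts = Counter(groups)
    total = len(groups)
    return {group: count / total for group, count in counts.items()}


def balancing_weights(groups):
    """Return one weight per example so that each group's weights sum to the same total."""
    counts = Counter(groups)
    n_groups = len(counts)
    total = len(groups)
    return [total / (n_groups * counts[group]) for group in groups]


# Hypothetical toy dataset: group "a" is over-represented.
example_groups = ["a"] * 6 + ["b"] * 2

shares = group_shares(example_groups)        # share of each group in the data
weights = balancing_weights(example_groups)  # smaller weights for "a", larger for "b"
```

Weights of this kind could be passed to a training procedure that accepts per-example weights. They address representation only; they do not correct labels that are themselves biased.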

Comparison and Contrast of Fairness Metrics in AI

Various fairness metrics are employed to assess and quantify bias in AI systems. Some metrics focus on disparate impact, measuring whether favorable outcomes are distributed disproportionately across demographic groups. Others emphasize equal opportunity, requiring that individuals who qualify for a favorable outcome have similar probabilities of receiving it regardless of group membership. The choice of metric depends on the specific application and the desired fairness criteria.

Different metrics may yield different results, underscoring the need for careful consideration when selecting and interpreting fairness measures.
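The point that metrics can disagree is easier to see with numbers. The sketch below computes two simple measures on hypothetical toy data: a disparate impact ratio (lowest group selection rate divided by the highest) and an equal opportunity gap (difference in true positive rates between groups). The function names, the data, and these particular formulations are assumptions chosen for illustration; other definitions are in use.

```python
from collections import defaultdict


def selection_rates(groups, predictions):
    """Share of favorable predictions (1) within each group."""
    totals = defaultdict(int)
    favorable = defaultdict(int)
    for group, pred in zip(groups, predictions):
        totals[group] += 1
        favorable[group] += pred
    return {g: favorable[g] / totals[g] for g in totals}


def disparate_impact_ratio(groups, predictions):
    """Lowest selection rate divided by the highest; 1.0 means equal rates."""
    rates = selection_rates(groups, predictions)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


def true_positive_rates(groups, labels, predictions):
    """Share of truly qualified individuals (label 1) predicted favorably, per group."""
    qualified = defaultdict(int)
    hits = defaultdict(int)
    for group, label, pred in zip(groups, labels, predictions):
        if label == 1:
            qualified[group] += 1
            hits[group] += pred
    return {g: hits[g] / qualified[g] for g in qualified}


def equal_opportunity_gap(groups, labels, predictions):
    """Largest difference in true positive rate between any two groups."""
    rates = true_positive_rates(groups, labels, predictions)
    return max(rates.values()) - min(rates.values())


# Hypothetical toy data: both groups receive favorable outcomes at the same rate,
# but qualified members of group "b" are approved only half as often.
groups      = ["a", "a", "a", "a", "b", "b", "b", "b"]
labels      = [1, 1, 0, 0, 1, 1, 0, 0]
predictions = [1, 1, 0, 0, 1, 0, 1, 0]

di_ratio = disparate_impact_ratio(groups, predictions)        # 1.0
eo_gap = equal_opportunity_gap(groups, labels, predictions)   # 0.5
```

On this data the disparate impact ratio reports parity while the equal opportunity gap reports a substantial difference, which is why the choice of metric needs to match the application.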

Examples of AI Systems Exhibiting Bias

Numerous examples exist of AI systems exhibiting bias. Automated loan-approval systems have been shown to discriminate against minority groups. Facial recognition systems have demonstrated higher error rates for people of color. These examples highlight the potential for AI to perpetuate existing societal biases if not carefully managed. A key takeaway is the need for comprehensive evaluations to detect and address biases in AI systems.

Table of Bias Types, Examples, and Mitigation Strategies

| Bias Type | Example | Description | Mitigation Strategy |
|---|---|---|---|
| Algorithmic Bias | A facial recognition system misclassifies darker-skinned individuals more frequently. | Bias embedded within the algorithm’s design or training process. | Employing algorithms designed to minimize bias, using diverse and representative datasets, and auditing the algorithm for biases. |
| Data Bias | A loan application dataset predominantly features applications from individuals of a specific socioeconomic background. | Bias inherent in the training data used to develop the AI system. | Gathering a more diverse and representative dataset, carefully pre-processing data to remove biases, and ensuring data quality and accuracy. |
| Societal Bias | An AI system used for parole prediction perpetuates existing racial disparities in the criminal justice system. | Bias stemming from societal prejudices and inequalities reflected in the data or the application itself. | Analyzing the impact of the AI system on different demographic groups, actively involving stakeholders from diverse backgrounds, and developing policies to mitigate the societal impact. |

Privacy and Data Security

Protecting user data is paramount in the development and deployment of AI systems. AI models often rely on vast datasets, raising significant concerns about the privacy and security of the information contained within. Robust mechanisms are needed to safeguard user data from unauthorized access and misuse, while enabling the development and deployment of beneficial AI applications.

AI systems frequently process sensitive personal information, and this information needs to be handled with the utmost care. Compromising data security can have serious consequences, including financial losses, reputational damage, and legal repercussions. Maintaining user trust in AI systems hinges on the assurance that their data is handled responsibly and ethically.

Importance of Data Privacy in AI

Protecting user privacy is fundamental to ethical AI development. Individuals have a right to control their personal information, and AI systems must respect these rights. Breaches of privacy can lead to significant harm, including identity theft, discrimination, and emotional distress. Protecting user data not only safeguards individuals but also fosters public trust in AI technologies. By prioritizing data privacy, we create a more equitable and responsible AI ecosystem.

Methods for Ensuring Data Security and Privacy in AI Systems

Ensuring data security and privacy in AI systems requires a multifaceted approach. Robust data security measures are critical to prevent unauthorized access, use, or disclosure. These measures should incorporate encryption, access control, and data anonymization techniques. Furthermore, regular security audits and incident response plans are essential for mitigating potential threats and vulnerabilities.

Challenges in Balancing Privacy with AI Development

Balancing privacy with the need for data in AI development presents a complex challenge. AI systems often require large datasets for training and operation, which can pose a conflict with individuals’ privacy concerns. Finding a balance between enabling innovation and safeguarding privacy is a key ethical consideration in AI development. Careful consideration of the types of data used, the methods for collecting and storing it, and the measures to protect it are vital to address this challenge.

Role of Anonymization and Pseudonymization in Protecting User Data

Anonymization and pseudonymization are crucial techniques for protecting user data in AI systems. Anonymization removes identifying information from data, with the goal of making it infeasible to link a record back to a specific individual; in practice, combinations of the remaining attributes can sometimes still permit re-identification. Pseudonymization, on the other hand, replaces identifying information with pseudonyms, allowing records to be linked without directly revealing the true identity of the individual. Both methods play a vital role in preserving user privacy while enabling the use of data for training and development.
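The difference between the two techniques can be sketched in a few lines. The example below drops direct identifiers to approximate anonymization and uses a keyed hash (HMAC-SHA256 from the Python standard library) to derive a stable pseudonym. The field names, the choice of HMAC, and the key handling are assumptions made for illustration, not a recommended production design.

```python
import hashlib
import hmac

# Hypothetical set of fields treated as direct identifiers.
DIRECT_IDENTIFIERS = {"name", "email", "address"}


def anonymize(record):
    """Drop direct identifiers entirely; no linkage key is kept."""
    return {key: value for key, value in record.items() if key not in DIRECT_IDENTIFIERS}


def pseudonymize(record, secret_key, id_field="email"):
    """Drop direct identifiers and add a keyed hash of one stable identifier.

    The same input and key always give the same pseudonym, so records can be
    linked, but the mapping cannot be recomputed without the secret key.
    """
    token = hmac.new(secret_key, record[id_field].encode("utf-8"), hashlib.sha256).hexdigest()
    cleaned = anonymize(record)
    cleaned["pseudonym"] = token
    return cleaned


# Hypothetical record and key; a real key would come from a secrets manager.
record = {"name": "Ada Example", "email": "ada@example.org", "age_band": "30-39"}
key = b"replace-with-a-securely-stored-key"

anonymous_record = anonymize(record)
pseudonymous_record = pseudonymize(record, key)
```

Removing direct identifiers alone does not guarantee anonymity, because attributes such as age band or postcode may still single out a person when combined. The secret key also needs the same protection as the original identifiers.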

Data Security Measures for AI Systems

| Security Measure | Pros | Cons | Examples |
|---|---|---|---|
| Encryption | Protects data confidentiality by converting it into an unreadable format. | Can be computationally expensive, and decryption keys must be managed securely. | HTTPS, end-to-end encryption |
| Access Control | Restricts access to data based on user roles and permissions. | Requires careful implementation to avoid loopholes and unauthorized access. | Role-based access control, multi-factor authentication |
| Data Anonymization | Removes identifying information from data, reducing privacy risks. | May limit the usefulness of the data for certain AI tasks. | Removing names, addresses, and other identifiers. |
| Data Pseudonymization | Replaces identifying information with pseudonyms, enabling data linkage without revealing true identities. | Requires careful management of pseudonymization schemes to prevent re-identification. | Using unique identifiers for individuals. |
| Secure Data Storage | Protects data from physical and environmental threats. | Requires robust physical security measures and data backups. | Data centers with physical security, secure cloud storage. |

Transparency and Explainability

Transparency in AI systems, and the ability to understand how they arrive at decisions, is becoming increasingly crucial. The lack of transparency can breed mistrust and hinder the responsible deployment of these technologies. Understanding the “black box” nature of some AI algorithms is a significant challenge, particularly when those algorithms are used in high-stakes applications like loan approvals or criminal justice.

Concept of Transparency in AI Systems

AI systems exhibit transparency when their decision-making processes are understandable and auditable. This means that the rationale behind a particular output can be traced back to the input data and the model’s internal workings. Ideally, users should be able to grasp how an AI system reached a specific conclusion, without requiring extensive technical expertise. This understanding is fundamental for building trust and identifying potential biases.

Ethical considerations are crucial in AI development, especially as AI productivity tools become more prevalent. These tools promise efficiency gains, but we must ensure they are used responsibly and don’t inadvertently perpetuate biases or erode human agency. Ultimately, a strong ethical framework is vital to ensure AI’s beneficial impact.

Importance of Explainable AI (XAI)

Explainable AI (XAI) aims to bridge the gap between complex AI models and human understanding. By providing insights into the decision-making process, XAI fosters trust and accountability. This is particularly important in high-stakes domains, where the consequences of AI-driven decisions can be substantial. XAI methods can also help identify and mitigate biases in data, algorithms, and decision-making processes.

Methods for Improving Transparency

Various methods can enhance the transparency of AI decision-making. One approach involves developing AI models with inherent transparency, such as rule-based systems or linear models. Another strategy is to favor interpretable algorithms, such as decision trees, whose decision paths can be read and checked directly. Furthermore, visualization techniques can be used to interpret complex patterns in data and the relationships between input variables and outputs.

Finally, clear documentation of the data used, the model’s architecture, and the training process is vital.
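To make the idea of an inherently transparent model concrete, here is a minimal sketch of how a linear scoring model can report the contribution of each input to its output. The weights, feature names, and values are hypothetical and chosen only so the arithmetic is easy to follow; a real model would learn its weights from data.

```python
def explain_linear_score(weights, bias, features):
    """Return the score of a linear model and each feature's contribution to it.

    Contributions are weight * value, ranked by absolute size so the most
    influential inputs come first.
    """
    contributions = {name: weights[name] * value for name, value in features.items()}
    score = bias + sum(contributions.values())
    ranked = sorted(contributions.items(), key=lambda item: abs(item[1]), reverse=True)
    return score, ranked


# Hypothetical weights and applicant features.
weights = {"income": 0.5, "debt_ratio": -2.0, "years_employed": 0.25}
bias = 1.0
applicant = {"income": 4.0, "debt_ratio": 0.5, "years_employed": 2.0}

score, ranked = explain_linear_score(weights, bias, applicant)
# score is 2.5; ranked lists income (+2.0), debt_ratio (-1.0), years_employed (+0.5)
```

Because every contribution is a simple product, a reviewer can verify the explanation by hand, which is the property that more complex models lack.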

Examples of AI Systems Lacking Transparency

Many AI systems, particularly deep learning models, operate as “black boxes.” These systems, often used in image recognition, natural language processing, and fraud detection, can produce accurate results without providing a clear explanation of how they arrived at those conclusions. For instance, a facial recognition system might correctly identify a person, but fail to explain why it made that particular identification.

This lack of transparency can lead to concerns about fairness and accountability.

Table of AI Systems and Transparency

| AI System | Decision-Making Process | Explanation Mechanism | Evaluation of Transparency |
|---|---|---|---|
| Credit Scoring Model | Evaluates applicant data based on complex algorithms | Simplified rule-based explanations for scoring criteria | Fairly transparent; users can understand the factors influencing their score. |
| Image Recognition System | Uses deep learning to classify images | Visualizations of activation maps to highlight relevant features | Limited transparency; explaining the reasons for classification can be challenging. |
| Medical Diagnosis AI | Analyzes patient data to suggest diagnoses | Explanation based on probability scores for different conditions | Moderate transparency; users may not fully understand the rationale behind the diagnosis. |
| Autonomous Vehicle | Processes sensor data to navigate | Detailed explanations of the sensor inputs, calculations, and decisions. | Transparency depends on the level of detail available. Requires more advanced methods for explanation. |

Accountability and Responsibility

Establishing accountability and responsibility within AI systems is crucial for fostering trust and ensuring ethical deployment. This necessitates a clear understanding of who is responsible when AI systems make mistakes or cause harm, alongside the development of mechanisms to mitigate such risks. The inherent complexity of AI systems, often involving intricate algorithms and vast datasets, demands a nuanced approach to determining responsibility.

Defining Accountability in AI Systems

Accountability in AI systems refers to the ability to identify and assign responsibility for actions taken by or resulting from the use of these systems. This extends beyond simply identifying the programmer or developer to the broader ecosystem of data providers, users, and organizations deploying the AI. A key aspect is establishing clear lines of communication and recourse when AI systems cause harm or make errors.

Ultimately, accountability ensures transparency and fairness in AI decision-making.

The Role of Humans in Ethical AI

Humans play a vital role in ensuring ethical AI development and deployment. This responsibility extends to the design phase, where ethical considerations should be integrated into the development process. Moreover, continuous monitoring and oversight of AI systems are essential to detect and address potential biases or harmful outcomes. Furthermore, robust human oversight is necessary to prevent misuse or unintended consequences.

Education and training programs are crucial for building human capacity to effectively manage and govern AI systems.

Assigning Responsibility in AI Systems

Assigning responsibility in AI systems requires a multifaceted approach. Clear protocols and guidelines are essential for defining responsibility across the AI lifecycle, from data collection to deployment. Transparency in the decision-making process of AI systems is paramount. This involves documentation of the algorithms, data sources, and decision-making logic. Furthermore, establishing clear lines of communication and escalation procedures is vital to ensure prompt response to incidents.
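One way to make such documentation actionable is to record, for each automated decision, which model version and data sources were involved and who can be contacted about it. The sketch below shows a possible shape for such a record; the class, its fields, and the example values are hypothetical and would need to be adapted to an organization's own processes.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class DecisionRecord:
    """One audit entry describing a single automated decision."""
    model_version: str
    data_sources: tuple
    inputs: dict
    output: str
    rationale: str
    responsible_contact: str
    # Recorded in UTC at creation time so entries can be ordered later.
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


def to_log_line(record):
    """Serialize a record as one JSON line suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)


# Hypothetical example entry.
entry = DecisionRecord(
    model_version="loan-model-1.2",
    data_sources=("applications_2023", "credit_bureau_feed"),
    inputs={"income": 4.0, "debt_ratio": 0.5},
    output="declined",
    rationale="debt_ratio above configured threshold",
    responsible_contact="model-owners@example.org",
)
log_line = to_log_line(entry)
```

Keeping the responsible contact and data sources next to each decision gives investigators a starting point when an incident has to be escalated.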

Comparing Models of Accountability

Different models of accountability for AI systems exist, each with its own strengths and weaknesses. Legal accountability focuses on establishing legal frameworks and penalties for AI-related harm. Ethical accountability, conversely, emphasizes the moral obligations and principles guiding AI development and deployment. The combination of both legal and ethical considerations provides a more comprehensive framework for addressing the complexities of AI accountability.

It is important to consider that these models are not mutually exclusive; they can be complementary.

Table of AI Accountability Examples

| AI System | Incident | Responsible Party | Resolution Strategy |
|---|---|---|---|
| Autonomous vehicle | Accident involving pedestrian | Vehicle manufacturer, programmer, data provider | Investigation into the algorithm’s decision-making, retraining of the system, potential legal action depending on the severity and outcome of the incident. |
| Loan application AI | Refusal of loan to a qualified applicant | Loan company, AI developer, data provider | Analysis of the algorithm for bias, adjustments to the system, compensation to the applicant, potential legal action |
| Medical diagnosis AI | Incorrect diagnosis of a patient | Hospital, AI developer, medical staff | Investigation into the algorithm’s accuracy, retraining of the system, review of the patient’s treatment plan, potentially legal action if harm resulted. |
| Recruitment AI | Discrimination against certain demographic groups | Company using the system, AI developers, data providers | Bias detection and mitigation, retraining of the system, implementation of human review processes, potential legal action |

Societal Impacts of AI

Artificial intelligence is rapidly transforming various aspects of society, presenting both promising opportunities and significant challenges. Understanding the potential societal impacts of AI, including its effects on employment, inequality, and overall well-being, is crucial for navigating this evolving landscape responsibly. The ethical considerations surrounding these impacts demand careful consideration and proactive measures to mitigate potential harms while maximizing benefits.

Potential for Job Displacement

The automation capabilities of AI raise concerns about potential job displacement across various sectors. While AI can automate routine tasks, freeing up human workers for more complex and creative roles, the transition can be disruptive. Some forecasts suggest that certain professions, such as data entry, transportation, and manufacturing, are likely to be significantly affected. This necessitates proactive workforce retraining and upskilling so that individuals can adapt to the changing job market and remain competitive, along with financial support for affected workers.

Exacerbation of Societal Inequalities

AI systems, trained on existing data, can inadvertently perpetuate and even amplify existing societal biases. If training data reflects historical inequalities, the resulting AI models can reproduce and amplify these biases in their outputs, leading to unfair or discriminatory outcomes in areas such as loan applications, criminal justice, and hiring processes. This requires careful attention to data quality and fairness in AI development and deployment.

Rigorous evaluation processes and continuous monitoring are essential to detect and mitigate bias.

AI Applications for Addressing Societal Challenges

AI offers powerful tools to tackle complex societal problems. For instance, AI-powered diagnostic tools can improve healthcare access and outcomes, while AI-driven solutions can enhance disaster response and aid in environmental monitoring. AI can also facilitate personalized education and support vulnerable populations.

Positive and Negative Impacts on Different Groups in Society

| Group | Potential Positive Impacts | Potential Negative Impacts | Examples |
|---|---|---|---|
| Workers | Creation of new jobs in AI-related fields, automation of repetitive tasks, increased productivity | Job displacement in certain sectors, widening skill gaps, potential for wage stagnation or decline | Rise of data scientists, AI engineers, and AI ethicists; automation of assembly line work; increased efficiency in logistics. |
| Businesses | Improved efficiency, reduced costs, enhanced decision-making capabilities, new revenue streams | Increased reliance on technology, potential for job displacement, need for significant investments in AI infrastructure and training | AI-powered customer service chatbots, optimized supply chains, predictive maintenance models. |
| Governments | Improved public services, enhanced security, better resource management, evidence-based policymaking | Potential for increased surveillance, concerns about algorithmic bias, lack of transparency in AI decision-making processes | AI-powered crime prediction models, improved traffic management systems, optimized resource allocation in disaster relief. |
| Individuals | Personalized healthcare, customized education, improved access to information, greater convenience | Privacy concerns related to data collection and use, potential for manipulation through targeted advertising, reliance on technology that could lead to social isolation | Personalized medicine, online learning platforms, recommendation engines for products and services. |

AI in Healthcare and Medicine

Artificial intelligence (AI) is rapidly transforming the healthcare landscape, offering potential benefits across various medical domains. From diagnostics to treatment planning, AI’s capabilities are expanding at an impressive pace, prompting crucial ethical considerations. This section delves into the multifaceted role of AI in healthcare, exploring both its potential and the associated ethical challenges.

AI’s application in healthcare is diverse and expanding. AI algorithms can analyze vast datasets of medical images, patient records, and research findings to identify patterns and predict outcomes. This capability extends to areas like drug discovery, personalized medicine, and robotic surgery.

Use of AI in Healthcare

AI is being employed in various ways to enhance healthcare. AI systems can analyze medical images (X-rays, CT scans, MRIs) to detect anomalies quickly and, for some tasks, with accuracy comparable to that of human radiologists. These systems can also assist in diagnosing diseases like cancer by identifying subtle patterns in medical data that might be missed by the human eye. Furthermore, AI-powered tools can predict patient risk factors, personalize treatment plans, and support drug discovery efforts.

Ethical Concerns Specific to AI in Healthcare

Ethical concerns are paramount when considering the use of AI in healthcare. One primary concern is algorithmic bias, where AI systems trained on biased data can perpetuate existing health disparities. Another critical concern is the lack of transparency in some AI algorithms, making it difficult to understand how decisions are reached. This opacity can erode trust and hinder the ability to identify and correct errors.

Data privacy and security are also significant issues, as healthcare data is highly sensitive and vulnerable to breaches. Accountability for errors in AI-assisted diagnoses and treatments is another pressing concern.

Potential Benefits and Risks of AI in Medical Diagnosis and Treatment

AI’s potential benefits in medical diagnosis and treatment are substantial. Improved accuracy and speed in diagnostics can lead to earlier and more effective interventions, potentially saving lives. Personalized treatment plans, tailored to individual patient characteristics, can enhance treatment efficacy and minimize side effects. AI can also accelerate drug discovery and development, potentially leading to new treatments for various diseases.

However, the risks are equally important. Errors in AI-assisted diagnoses can have severe consequences, potentially leading to misdiagnosis or inappropriate treatment. The reliance on AI systems could lead to a decline in human oversight and critical thinking, and a loss of the human touch in patient care.

Examples of Ethical Dilemmas in AI-Assisted Medical Procedures

Examples of ethical dilemmas in AI-assisted medical procedures include situations where AI systems recommend a treatment that conflicts with a physician’s judgment or intuition. Another scenario arises when AI systems produce a diagnosis that is different from a human physician’s, and determining whose interpretation is more accurate can be challenging. Data privacy concerns also arise in situations where AI systems process sensitive patient data, and the security of this data becomes paramount.

Ultimately, the ethical use of AI in healthcare requires a careful balance between leveraging its potential and mitigating the associated risks.

Potential Ethical Concerns of AI in Specific Healthcare Contexts

| Healthcare Context | Bias and Fairness | Privacy and Security | Transparency and Explainability |
|---|---|---|---|
| Diagnosis | AI systems trained on biased data might perpetuate existing health disparities, leading to inaccurate diagnoses for certain populations. | Patient data used to train and operate AI diagnostic tools must be handled securely and privately to avoid breaches. | Lack of transparency in diagnostic algorithms can hinder physician understanding and trust in AI recommendations. |
| Treatment | AI-driven treatment recommendations might not consider individual patient contexts, leading to unequal access or unsuitable interventions. | Secure storage and transmission of patient data used in AI-driven treatment plans are crucial to prevent unauthorized access. | The complexity of AI algorithms used in treatment planning can make it challenging to explain the rationale behind recommendations. |
| Drug Discovery | AI-driven drug discovery processes might overlook certain populations or fail to consider diversity in human health, resulting in biased drug development. | Sensitive data collected during drug development must be protected to maintain patient privacy and data security. | Transparency in AI-driven drug development processes is essential for accountability and to ensure ethical drug design and testing. |

AI in Autonomous Systems

Autonomous systems, encompassing vehicles, robots, and drones, are rapidly evolving, presenting significant ethical challenges. Their increasing sophistication raises critical questions about accountability, safety, and the potential for unintended consequences. The development and deployment of these systems require careful consideration of ethical principles to ensure responsible and beneficial outcomes.

Ethical Implications of Autonomous Systems

Autonomous systems operate without direct human intervention, making decisions based on complex algorithms and sensor data. This raises concerns about the fairness, transparency, and accountability of their actions. Ethical considerations must address the potential for bias in algorithms, the lack of human oversight in critical situations, and the need for clear guidelines regarding liability and responsibility. Moreover, the integration of these systems into society necessitates a comprehensive understanding of their societal impact, including potential job displacement and changes in infrastructure.

Ethical Guidelines in Autonomous Systems

Establishing ethical guidelines is crucial for ensuring the responsible development and deployment of autonomous systems. These guidelines must encompass diverse aspects, from safety and reliability to privacy and security. Robust testing procedures, rigorous validation processes, and continuous monitoring are essential to minimize the potential for harm. Furthermore, clear frameworks for accountability and liability in case of accidents or malfunctions are vital to building public trust and ensuring the safe integration of autonomous systems into our lives.

Potential for Harm in Autonomous Systems

Autonomous systems, while promising, carry the potential for harm. Errors in algorithms, malicious hacking, or unforeseen circumstances can lead to accidents, injuries, or even fatalities. The complexity of these systems and the lack of human intervention in critical situations can amplify the risk of harm. For example, an autonomous vehicle might make a wrong decision in a complex traffic situation, potentially causing an accident. The impact of such incidents can be severe and far-reaching.

Examples of Ethical Dilemmas in Autonomous Systems

Autonomous systems face numerous ethical dilemmas, often involving trade-offs between competing values. For instance, an autonomous vehicle that encounters a pedestrian and a cyclist in a sudden, unpredictable situation may be forced to choose whose risk to minimize. Similarly, autonomous robots operating in disaster zones or hazardous environments must decide whether to prioritize protecting human lives over completing their assigned tasks. These scenarios highlight the need for clear ethical guidelines and decision-making frameworks to guide autonomous systems in such challenging circumstances.

Table: Ethical Considerations in Autonomous Systems

| Autonomous System | Potential Harm | Mitigation Strategy | Evaluation Metrics |
|---|---|---|---|
| Autonomous Vehicles | Accidents caused by flawed algorithms, malicious attacks, or unexpected environmental conditions. | Rigorous testing protocols, robust safety systems, and continuous monitoring of performance. Developing algorithms that prioritize safety over speed in certain situations. | Accident rates, safety ratings, incident reports, and feedback from users. |
| Autonomous Weapons Systems | Unintended consequences, loss of human control, and potential for escalation of conflicts. | Strict regulations and international agreements prohibiting the deployment of autonomous weapons systems lacking human oversight. | Probability of unintended harm, level of human control, and potential for escalation. |
| Autonomous Surgical Robots | Surgical errors, device malfunctions, or inappropriate interventions. | Comprehensive training for operators, advanced error detection mechanisms, and rigorous testing protocols. | Surgical success rates, complication rates, and patient satisfaction. |
| Autonomous Agricultural Robots | Damage to crops or livestock, unintended environmental impacts, and displacement of agricultural workers. | Careful design and implementation to minimize environmental impact and optimize crop yields while preserving human jobs. | Yield increases, environmental impact analysis, and impact on employment. |

International Collaboration and Standards

International collaboration is crucial for establishing and enforcing ethical guidelines for AI development and deployment. A unified approach across nations fosters trust and prevents the creation of divergent ethical standards that could hinder global progress in AI. Furthermore, diverse perspectives and experiences contribute to a more robust and comprehensive ethical framework.

Importance of International Cooperation

International cooperation in AI ethics is essential to address the global nature of AI development and its potential impacts. A standardized approach across nations ensures that AI systems are developed and deployed responsibly, considering the diverse needs and values of different societies. This collaborative effort fosters trust and encourages shared best practices. Without international cooperation, fragmented approaches could lead to inconsistencies and potential conflicts in the ethical application of AI.

Need for Global Ethical Standards

Global ethical standards are imperative for regulating the rapidly evolving field of AI. Such standards provide a common framework for evaluating and addressing potential ethical concerns related to AI development and use. These standards ensure accountability, promote transparency, and mitigate risks associated with biased or harmful AI systems. A lack of consistent ethical guidelines could lead to unforeseen consequences and uneven application of AI technologies globally.

Existing International Frameworks

Several international organizations and initiatives are actively working to establish frameworks for AI ethics. These frameworks often address key issues such as bias, fairness, privacy, and transparency. Examples include the OECD AI Principles, adopted in 2019, which provide a set of guidelines for ethical AI development and use. These principles offer a starting point for discussion and implementation of AI ethics globally.

Improving Existing Frameworks

Existing international frameworks for AI ethics can be enhanced by incorporating more specific guidelines on practical implementation. Furthermore, these frameworks should be continuously reviewed and updated to reflect the evolving landscape of AI technology and its societal impacts. Greater emphasis on diverse perspectives and stakeholder engagement in the development and refinement of these frameworks will ensure their relevance and applicability across various contexts.

Table of International Organizations and Initiatives

| Organization/Initiative | Focus Area | Key Initiatives | Website |
|---|---|---|---|
| OECD | AI Principles | Developing principles for trustworthy AI, covering areas like bias, transparency, and accountability. | www.oecd.org |
| UN | AI for Development | Exploring the use of AI to address global challenges, while incorporating ethical considerations. | www.un.org |
| EU | AI Act | Establishing a comprehensive legal framework for AI systems, including requirements for transparency and safety. | ec.europa.eu |
| IEEE | AI Ethics Standards | Developing technical standards and guidelines for AI systems, focusing on reliability, safety, and ethical considerations. | ieee.org |

AI and the Environment

AI’s burgeoning influence extends beyond the digital realm, impacting the environment in significant ways. From resource consumption to algorithm design, the environmental footprint of AI systems is a critical ethical concern. Understanding this footprint is paramount to developing responsible AI practices that minimize harm and maximize benefit.

Environmental Impact of AI

AI’s environmental impact stems from both the energy consumption of its hardware and the energy-intensive processes required for training complex algorithms. The sheer volume of data processed and the computational power demanded by sophisticated models contribute to considerable energy use. Furthermore, the manufacturing and disposal of AI hardware components have environmental implications. The production of semiconductors, for example, involves substantial energy use and material extraction, while the eventual disposal of these components can introduce hazardous materials into the environment.

Ethical Considerations of AI and Resource Consumption

The escalating demand for energy to power AI systems raises ethical concerns about resource consumption and sustainability. As AI adoption grows, it’s imperative to explore more sustainable practices and alternative energy sources to minimize the environmental burden. The ethical considerations extend to the design of AI algorithms, emphasizing energy efficiency and minimizing waste throughout the entire AI lifecycle. One approach involves scrutinizing the energy intensity of different AI models and optimizing their designs to reduce their environmental impact.

Examples of AI for Environmental Solutions

AI presents opportunities to address pressing environmental challenges. For instance, AI-powered predictive models can help optimize energy grids, reducing waste and enhancing efficiency. Moreover, AI algorithms can assist in monitoring and managing natural resources, enabling more sustainable practices in agriculture, forestry, and water management. Specific applications include optimizing irrigation schedules in agriculture to conserve water and improving weather forecasting so that communities can better prepare for climate-related extreme events.

Environmental Footprint of AI Algorithms and Hardware

The environmental impact of AI is multi-faceted. Training sophisticated AI models often demands substantial computing resources, leading to a significant carbon footprint. The manufacturing and disposal of AI hardware components also contribute to the environmental burden. A careful analysis of the entire AI lifecycle is essential to fully understand its impact. Consideration must be given to the materials used in manufacturing, the energy consumption of data centers, and the environmental impact of algorithm design.
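To make the link between computing resources and carbon footprint concrete, the following minimal sketch estimates training emissions from three inputs: average power draw, run time, and the carbon intensity of the electricity grid. The function name, the data center overhead factor (PUE) of 1.5, and the grid intensity of 0.4 kg CO2e per kWh are illustrative assumptions, not measured values; a real assessment would substitute figures from the actual facility and energy supplier.

```python
def estimate_training_emissions_kg(
    avg_power_kw: float,
    hours: float,
    pue: float = 1.5,  # assumed data center overhead factor (illustrative)
    grid_intensity_kg_per_kwh: float = 0.4,  # assumed grid carbon intensity (illustrative)
) -> float:
    """Rough estimate of CO2-equivalent emissions (kg) for one training run."""
    if avg_power_kw < 0 or hours < 0 or grid_intensity_kg_per_kwh < 0:
        raise ValueError("power, hours, and grid intensity must be non-negative")
    if pue < 1.0:
        raise ValueError("PUE cannot be below 1.0")
    # Energy drawn by the hardware, scaled up by cooling and facility overhead.
    energy_kwh = avg_power_kw * hours * pue
    # Convert energy to emissions using the grid's carbon intensity.
    return energy_kwh * grid_intensity_kg_per_kwh


# Hypothetical run: 8 accelerators at 0.3 kW each for 100 hours.
emissions = estimate_training_emissions_kg(avg_power_kw=8 * 0.3, hours=100)
print(f"Estimated emissions: {emissions:.1f} kg CO2e")  # prints 144.0 kg CO2e
```

This estimate covers only operational energy. It leaves out the manufacturing and disposal impacts described above, which would need a separate lifecycle analysis.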

Environmental Footprint Analysis of AI Systems

| AI System Component | Environmental Impact | Possible Solutions | Examples |
|---|---|---|---|
| Data Center Energy Consumption | High energy demand for training and operation | Transition to renewable energy sources, energy-efficient hardware | Using solar or wind power for data centers, implementing AI algorithms optimized for lower energy consumption |
| Algorithm Design | Computational complexity can lead to high energy use | Develop more efficient algorithms, reduce data redundancy | Using neural network pruning techniques to decrease the computational cost, employing more lightweight machine learning models |
| Hardware Manufacturing | Resource intensive, waste generation | Sustainable materials, circular economy models | Utilizing recycled materials in manufacturing, developing AI hardware with longer lifecycles and improved recyclability |
| Hardware Disposal | Potential for hazardous waste generation | Improved recycling processes, responsible e-waste management | Implementing stringent regulations for e-waste disposal, developing more durable and recyclable AI hardware components |
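The neural network pruning mentioned in the table can be illustrated with one common variant, magnitude pruning, which sets the smallest weights to zero so that fewer computations are needed. The sketch below works on a plain list of numbers rather than a real model, and the function name and sample weights are hypothetical. Production frameworks offer their own pruning utilities, which this example does not attempt to reproduce.

```python
def magnitude_prune(weights: list[float], sparsity: float) -> list[float]:
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    if not 0.0 <= sparsity <= 1.0:
        raise ValueError("sparsity must be between 0 and 1")
    n_prune = int(len(weights) * sparsity)
    # Rank weight positions by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    # Keep large weights unchanged; replace the smallest ones with zero.
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]


# Hypothetical layer weights, pruned to 50% sparsity.
layer = [0.9, -0.05, 0.4, 0.01, -0.7, 0.2]
print(magnitude_prune(layer, sparsity=0.5))  # [0.9, 0.0, 0.4, 0.0, -0.7, 0.0]
```

Zeroed weights only save energy when the hardware or runtime can skip them, and accuracy typically needs to be re-checked after pruning.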

Future Trends in AI Ethics

The field of AI ethics is continuously evolving, driven by the rapid advancement of AI technologies and the increasing societal impact they have. Understanding future trends is crucial for proactively addressing potential challenges and maximizing the benefits of AI. This includes anticipating emerging ethical concerns and adapting existing frameworks to accommodate new technologies and applications.

Emerging Trends in AI Ethics

The development of more sophisticated AI systems, coupled with broader societal adoption, necessitates a continuous evolution of ethical guidelines. Several key trends are shaping the future landscape of AI ethics. These include the growing awareness of the potential for bias amplification in AI algorithms, the need for improved explainability and transparency in AI decision-making processes, and the increasing importance of robust accountability mechanisms for AI systems.

Predicting Future Challenges and Opportunities

Future challenges in AI ethics will arise from the expanding use cases of AI. These challenges will include the potential for AI to exacerbate existing societal inequalities, the difficulties in establishing clear lines of responsibility for AI-driven actions, and the need to safeguard human autonomy in an increasingly automated world. Opportunities exist to leverage AI to address critical societal problems, such as improving healthcare access, enhancing environmental sustainability, and fostering more inclusive communities. AI ethics frameworks must anticipate these potential challenges and opportunities to ensure responsible AI development and deployment.

Need for Ongoing Reflection and Adaptation

AI ethics is not a static field; it requires ongoing reflection and adaptation to remain relevant. As AI technologies advance, new ethical considerations will emerge. This necessitates a dynamic approach to ethical guidelines, principles, and frameworks. Continuous dialogue, feedback, and revisions are crucial for ensuring that AI ethics evolves alongside the technology.

Role of Public Discourse in Shaping the Future of AI Ethics

Public discourse plays a vital role in shaping the future of AI ethics. Open dialogue among researchers, policymakers, ethicists, and the public is essential for identifying emerging ethical concerns and for fostering a shared understanding of the implications of AI. This engagement can lead to the development of more robust and adaptable ethical guidelines and regulations.

Potential Future Developments in AI Ethics

| Trend | Description | Impact | Mitigation Strategy |
|---|---|---|---|
| Bias Detection and Mitigation in Advanced AI Models | AI models will become increasingly complex, potentially amplifying existing biases in data. New techniques will be developed to detect and mitigate bias at various stages of the AI lifecycle. | Increased risk of unfair or discriminatory outcomes in areas like loan applications, hiring processes, and criminal justice. | Development of more sophisticated bias detection tools and techniques; the implementation of diverse datasets and the use of fairness-aware algorithms during model training. |
| Explainable AI (XAI) for Complex Systems | As AI systems become more intricate, the need for explainability will increase. Methods to interpret AI decision-making processes will be refined. | Improved trust and transparency in AI systems, leading to greater public acceptance and adoption. | Continued research into XAI methods, the development of standardized metrics for evaluating explainability, and the integration of XAI tools into AI development pipelines. |
| AI-Driven Healthcare and Personalized Medicine | AI will be used to improve healthcare outcomes and create personalized treatment plans. Ethical concerns about data privacy and patient autonomy will need to be addressed. | Potential for increased access to care, more accurate diagnoses, and tailored treatments, but also risks related to data security and the potential for misuse of sensitive patient information. | Stronger data privacy regulations, robust consent mechanisms, and enhanced security measures to protect patient data. Clear guidelines and protocols for using AI in healthcare must be established. |
| AI in Autonomous Systems and the Law | Autonomous systems, such as self-driving cars and drones, will become more prevalent. Determining liability and responsibility in cases of accidents or harm will require new legal frameworks. | Challenges in assigning liability and responsibility when autonomous systems make mistakes. | Development of international standards and legal frameworks for autonomous systems; proactive engagement with policymakers to address the legal and ethical implications of autonomous systems. |
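As a small illustration of what a bias detection tool from the first row might compute, the sketch below measures the demographic parity difference: the gap between the highest and lowest rate of positive decisions across groups. This is only one of several fairness metrics, and the function names and sample loan decisions are hypothetical. A value near zero suggests similar treatment on this measure, though it does not by itself show that a system is fair.

```python
from collections import defaultdict


def selection_rates(decisions: list[int], groups: list[str]) -> dict[str, float]:
    """Share of positive decisions (1 = approved, 0 = denied) per group."""
    if len(decisions) != len(groups) or not decisions:
        raise ValueError("decisions and groups must be non-empty and equal in length")
    totals: dict[str, int] = defaultdict(int)
    positives: dict[str, int] = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        positives[group] += decision
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_difference(decisions: list[int], groups: list[str]) -> float:
    """Gap between the highest and lowest group selection rates."""
    rates = selection_rates(decisions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical loan decisions for two groups of four applicants each.
decisions = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
print(demographic_parity_difference(decisions, groups))  # 0.5
```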

Final Summary

In conclusion, ethical considerations are paramount in the development and implementation of artificial intelligence. The principles of fairness, transparency, and accountability must be central to every stage of AI design. This discussion highlighted the importance of ongoing dialogue and collaboration to navigate the challenges and opportunities of this transformative technology.

Top FAQs

What are some common biases in AI algorithms?

AI algorithms can inherit biases from the data they are trained on, potentially leading to discriminatory outcomes. This data bias can reflect societal prejudices, resulting in unfair or inaccurate predictions.

How can we ensure data privacy in AI systems?

Data anonymization, pseudonymization, and robust encryption protocols are crucial to safeguarding user data in AI applications. Strict access controls and secure storage are also vital.
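As a rough sketch of pseudonymization, the example below replaces an identifier with a keyed hash (HMAC-SHA256) using Python's standard library. The function name, sample identifier, and key are hypothetical; in practice the key would be stored separately under strict access controls, since anyone holding it can re-link pseudonyms to known identifiers.

```python
import hashlib
import hmac


def pseudonymize(identifier: str, secret_key: bytes) -> str:
    """Replace an identifier with a keyed hash so records can still be linked."""
    digest = hmac.new(secret_key, identifier.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()


# Hypothetical key for illustration only; never hard-code a real key.
key = b"example-key-for-illustration"
token = pseudonymize("patient-12345", key)

# The same identifier and key always give the same pseudonym.
assert token == pseudonymize("patient-12345", key)
print(len(token))  # 64 hexadecimal characters
```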

What role do international standards play in AI ethics?

International cooperation and shared ethical standards are essential to mitigate the risks and maximize the benefits of AI across different cultures and regions.

How can AI be used to address environmental challenges?

AI can optimize resource use, predict environmental events, and support the development of sustainable solutions, contributing to a more environmentally conscious future.